What is Multimodal AI?

Multimodal AI refers to the application of artificial intelligence or machine learning to find, process, and combine data such as video, text (clinical notes, reports, patient histories), images (histopathological slides, ultrasounds, MRIs), PDFs, audio (organ sounds from digital stethoscopes), sensor readings (wearables, bedside monitors), and other modalities from varied sources. In healthcare, these richer datasets may include electronic health or medical records (EHRs or EMRs), monitor or sensor readings, scans and images, and proteomic, genomic, genetic, or single-cell data, all brought together for a comprehensive analysis.

The AI models in medtech solutions are based on architectures that preprocess each data type and then integrate them. Because they are easy to integrate and interpret, and generate swift and accurate outcomes, these models are applied across personalized medicine, triage, early disease diagnosis, prognosis, clinical trial design, etc. Multimodal AI (MMAI) models like Med-PaLM M can perform various tasks, such as image recognition (X-rays, CT scans), speech recognition, and translation of clinical text, and capture complex interactions across medical contexts.

Healthcare workers utilize such data to improve decision-making and enhance diagnostic accuracy. Unlike single-modality models, these platforms amalgamate diverse data and produce a deeper understanding of the patient’s overall health. To visualize the comparative benefits, consider an AI-based model, designed through AI development services, that analyzes knee X-ray images of a patient.

Alongside the images, it would also evaluate electronic health records and historical data of the patient, including descriptions of symptoms, laboratory test findings, etc. Medical specialists and radiologists can then thoroughly assess the condition for arthritis or other bone-related conditions, rather than limiting their diagnosis and treatment exclusively to images.

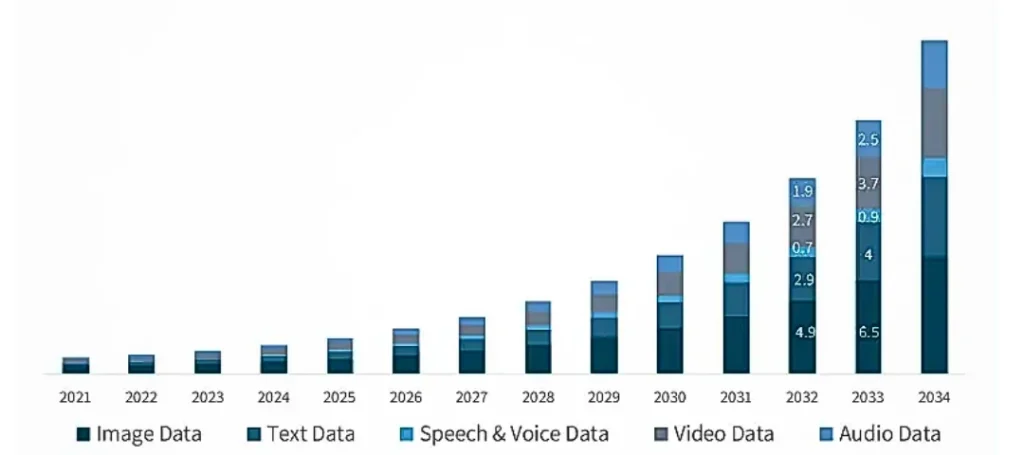

The global market for multimodal AI in healthcare was estimated at around US $1.6 billion in 2024 and is expected to reach approximately US $27 billion by 2034, growing at a CAGR of 32.7% during this forecast period. In this blog, let us go through the potential of AI models running on multimodal data and how medical professionals can unlock it.

Source: Global Market Insights

Growing market size of multimodal AI during the forecast period 2021 to 2034
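The market figures quoted above can be sanity-checked with the standard compound growth formula. The short sketch below is only an arithmetic check of the quoted numbers (US $1.6 billion in 2024, US $27 billion in 2034, 32.7% CAGR); the function names are illustrative and not part of any library.

```python
def project_value(start_value: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return start_value * (1.0 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Annual growth rate that turns start_value into end_value over `years`."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures quoted in the text, in US $ billions.
years = 2034 - 2024
projected_2034 = project_value(1.6, 0.327, years)
rate = implied_cagr(1.6, 27.0, years)

print(f"Projected 2034 market size: US ${projected_2034:.1f} billion")
print(f"Implied CAGR: {rate:.1%}")
```

Compounding US $1.6 billion at 32.7% for ten years lands close to US $27 billion, so the three quoted figures are consistent with one another.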

Functions of AI in Healthcare Diagnostics

MMAI has a plethora of applications in the healthcare industry that have, over time, improved patient outcomes, sharpened diagnoses, and enhanced personalized treatment. This is because the models run on the fused outputs of neural networks that are trained separately for text, video, images, structured data, and more.

Frameworks such as PyTorch or TensorFlow give teams the flexibility to customize these models for specific clinical tasks. Examples include early-stage AI breast cancer detection by fusing information gained through AI for medical imaging with genomic profiles, forecasting the progression of kidney disease by combining patient demographics with test results, predicting diabetes conditions by integrating lifestyle data with blood biomarkers, and so on.

For example, an AI medical diagnosis model may use a transformer-based language model to analyze clinical texts, a convolutional neural network or vision transformer to evaluate MRI scans, and a feedforward network to assess lab test results. It then fuses these inputs either early (at the raw-data level) or late (at the level of processed features or embeddings), using concatenation or attention mechanisms, to produce a well-informed diagnosis and treatment plan. Given below are a few such important roles of these models.
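The fusion step described above can be sketched in a few lines. The example below is a minimal, dependency-free illustration, not a production implementation: the embeddings are made-up toy values standing in for encoder outputs, the `query` vector is a hypothetical stand-in for a learned parameter, and a real system would typically express the same operations with PyTorch or TensorFlow tensors.

```python
import math
from typing import Dict, List

Vector = List[float]


def concat_fusion(embeddings: Dict[str, Vector]) -> Vector:
    """Fuse by concatenation: join modality vectors end to end in a fixed order."""
    fused: Vector = []
    for name in sorted(embeddings):  # sorted keys keep the layout deterministic
        fused.extend(embeddings[name])
    return fused


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fusion(embeddings: Dict[str, Vector], query: Vector) -> Vector:
    """Fuse by attention: score each modality against a query vector
    (scaled dot product), then return the softmax-weighted sum.
    All embeddings must share the query's dimension."""
    names = sorted(embeddings)
    dim = len(query)
    scores = [
        sum(q * x for q, x in zip(query, embeddings[n])) / math.sqrt(dim)
        for n in names
    ]
    weights = softmax(scores)
    return [
        sum(w * embeddings[n][i] for w, n in zip(weights, names))
        for i in range(dim)
    ]


# Toy embeddings standing in for per-modality encoder outputs (made-up values).
embeddings = {
    "mri": [0.9, 0.1, 0.0],
    "notes": [0.2, 0.7, 0.1],
    "labs": [0.1, 0.2, 0.7],
}
query = [1.0, 0.0, 0.0]  # hypothetical learned query vector

concatenated = concat_fusion(embeddings)        # 9 values: labs, mri, notes
attended = attention_fusion(embeddings, query)  # 3 values: a weighted blend

print(concatenated)
print(attended)
```

Concatenation keeps every feature but grows with the number of modalities, while attention keeps the fused vector at a fixed size and lets the model emphasize whichever modality is most relevant to the query.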

Drug Discovery

These models have proved useful for discovering target molecules and genetic entities related to a disease during drug development, a crucial part of the pharmaceutical industry. MMAI swiftly correlates protein interactions and gene expression with the phenotypic results seen in medical imaging data or lab outcomes, which accelerates the identification of biomarkers and targets. Researchers organize, structure, and analyze the assembled multimodal data using AI models, and then prioritize drug targets, collaborate on them, and design candidate molecules at scale. This not only helps the development pipeline but also enables necessary early intervention through therapeutic medical equipment.

Disease Intervention

AI in healthcare diagnostics is useful in early disease detection, as these models can integrate imaging data, EHRs or EMRs, molecular diagnostics, genetic profiles, and more for disease pattern recognition in humans. The early onset of chronic diseases like cancer, cardiovascular conditions, diabetic retinopathy, Parkinson’s disease, etc. can be identified prior to the appearance of symptoms. This paves the way for preventive intervention and effective treatment with continuous feedback using agentic AI solutions.

Trial Framework

These models are useful for designing effective clinical trials and analyzing trial data, as they enable result prediction, better patient monitoring, and even patient selection. They combine various forms of patient data, such as behavioral data from mobile or wearable health monitoring devices, EHRs, lab outcomes, and genomic, proteomic, and medical imaging data, to choose suitable candidates who are likely to respond during trials. The chances of regulatory success increase while costs and timelines are reduced, as MMAI can also continuously evaluate safety and efficacy trends so that researchers can adapt accordingly.

Individualized Treatment

AI medical diagnosis has also proved to be useful in personalized treatment, as these models can categorize patients as per their lifestyle information, genetic profiles, clinical history, biometrics, etc. Medical professionals can analyze huge multimodal datasets using these models and create tailored solutions, such as for assisted reproduction treatment. Any risks of reactions from common medicines are effectively reduced while therapeutic outcomes are improved as a holistic view of every patient is considered.

Others

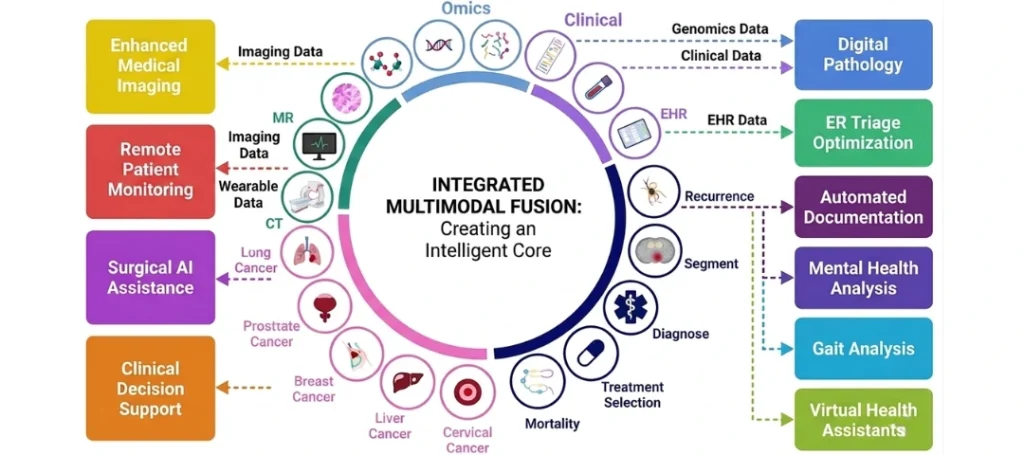

Further, multimodal large language models also improve radiology diagnostics, as they integrate clinical notes with images to point out errors and detect abnormalities, lesions, or anomalies. Information from histopathology slides and genomics can be combined for better prognosis and disease classification. These models can also combine wearable sensor data with EHRs and patient-reported symptoms to monitor and detect health issues remotely. Clinical decision support systems reduce the cognitive load on physicians by generating structured data for rapid risk assessments and AI medical diagnostics suggestions. Moreover, virtual health assistants powered by MMAI swiftly analyze multimodal inputs, such as vital signs and triage notes, to support emergency diagnostics in critical care settings.

The integrated multimodal AI in healthcare ecosystem in the context of cancer treatment

Benefits of Using Multimodal AI in Healthcare

As we have seen, multimodal AI models based on artificial intelligence or machine learning integrate molecular, behavioral, clinical, genetic, or proteomic data to support a more nuanced view of diseases and patient health. Apart from bridging the gap between varied data types in the healthcare industry, deploying multimodal AI models offers the advantages given below.

1. Patient Outcomes: These models provide a holistic understanding of the patient’s health and enable targeted treatment approaches. They draw on patient datasets and perform in-depth analysis of individual therapy responses, reactions, and risks to align further care. As a result, treatment efficacy is enhanced, adverse reactions are reduced, and early interventions are made possible.

2. Precise Diagnostics: Multimodal AI in healthcare analyzes different types of data from different sources simultaneously to assist clinicians with diagnostic certainty and strategies to counter disease. A complete view of the patient’s health conditions can be useful in identifying rare or complex medical conditions that might not be highlighted through single-modality analysis.

3. Drug Development: MMAI supports research teams by pinpointing non-viable candidates, flagging potential drug interactions, and synthesizing new molecular structures using generative AI services. It amalgamates clinical data, omics, and imaging results for recruiting patients, identifying targets, and designing clinical trials. This reduces the cost of manual labor, increases success rates, and accelerates development timelines.

4. Clinical Documentation: Models using AI in healthcare diagnostics can understand spoken inputs from medical professionals and patients using Natural Language Processing (NLP), Retrieval-Augmented Generation (RAG), etc., and then combine them with real-time images. The system makes it easy to comprehend the situation, disease, symptoms, treatment, and interpret the data, thus streamlining clinical documentation, minimizing errors, and ensuring EHR maintenance.

Embrace the Future of Healthcare with KritiKal

In this blog, we covered the basics of AI or ML-based multimodal models that can build comprehensive and holistic patient health profiles, predict diagnoses, and generate personalized treatment plans by integrating multiple data types, like text, images, and speech, through our AI integration services. They offer various benefits and use cases, including accurate and nuanced diagnostics, precision medicine, remote patient monitoring, device interoperability, accelerated drug discovery, and biomarker identification.

KritiKal excels at creating models that support real-time insights and continuously perform different types of unsupervised learning from patient data to suggest adaptive care and informed clinical decision-making. Our domain experts can build flexible, user-friendly, scalable, and future-ready systems that gain clinician and patient trust. Our multimodal AI implementation strategy involves interdisciplinary collaboration amongst data scientists, physicians, and all stakeholders while ensuring privacy, adherence to regulations in healthcare, security, and continuous model validation and refinement.

We assist you in overcoming the initial challenges of deploying these models in your day-to-day business operations, such as data integration and standardization across modalities, the black box issue of model explainability, privacy and security risks related to sensitive patient data, missing, noisy, and misaligned data handling, and high computational and resource requirements.
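One of the challenges listed above, missing data, can be illustrated with a simple late-fusion rule. The sketch below assumes each modality-specific model outputs a risk probability, or `None` when that modality is unavailable for a patient; the function name, modality names, weights, and scores are all hypothetical and chosen only for illustration.

```python
from typing import Dict, Optional


def late_fusion(
    probabilities: Dict[str, Optional[float]],
    weights: Dict[str, float],
) -> Optional[float]:
    """Weighted average of per-modality risk scores, skipping missing ones.

    Weights of the available modalities are renormalized so they sum to 1.
    Returns None when no modality is available.
    """
    available = {m: p for m, p in probabilities.items() if p is not None}
    total_weight = sum(weights[m] for m in available)
    if total_weight == 0:
        return None
    return sum(weights[m] * p for m, p in available.items()) / total_weight


# Hypothetical modality weights and per-modality model outputs.
weights = {"imaging": 0.5, "notes": 0.3, "labs": 0.2}
patient_scores = {"imaging": 0.8, "notes": None, "labs": 0.4}  # notes missing

fused_risk = late_fusion(patient_scores, weights)
print(fused_risk)
```

Renormalizing the weights is only one possible design choice; other approaches, such as imputing the missing modality or training the model with modality dropout, may suit a given clinical setting better.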

To conclude, AI-powered multimodal models are evolving rapidly in the healthcare domain, with future directions that include adaptive cross-modality fusion techniques and more. While our models are built for better diagnostic accuracy, their modular architectures enable flexibility, scalability, and better human-AI collaboration in clinical workflows for improved patient outcomes. Please get in touch with us at sales@kritikalsolutions.com to know more about our AI-based products, platforms, and services and to realize your business requirements.

Surbhi Navali currently works as a Software Engineer at KritiKal Solutions. She is skilled in developing and deploying solutions based on generative AI, agentic AI, computer vision, and LLMs. With her ability to work efficiently in teams, she has assisted KritiKal in delivering various projects to some major clients.