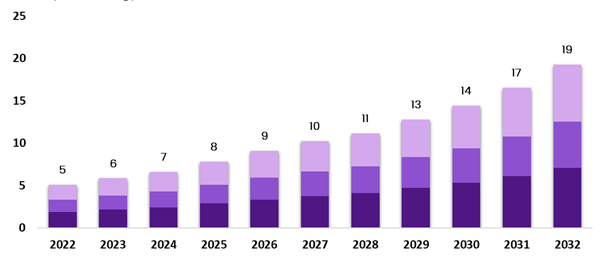

Face detection and alignment is a part of face analysis that estimates a set of specific points defining a particular face. These points typically cover the eyes, eyebrows, nose, jaw, lips, and mouth. Locating these landmarks, or key points, in images of human faces is called landmark detection; doing so across the frames of a video is called landmark tracking. Face misalignment is a common problem when images are taken at different points in time or with different non-invasive imaging modalities, which creates a need for efficient, fast, and accurate face alignment technology. The global facial alignment and recognition market was valued at $4.35 billion as of 2019 and is expected to grow at a CAGR of 14.8% to reach about $19.92 billion by 2032.

Rising market size of face detection and alignment solutions from 2022 to 2032

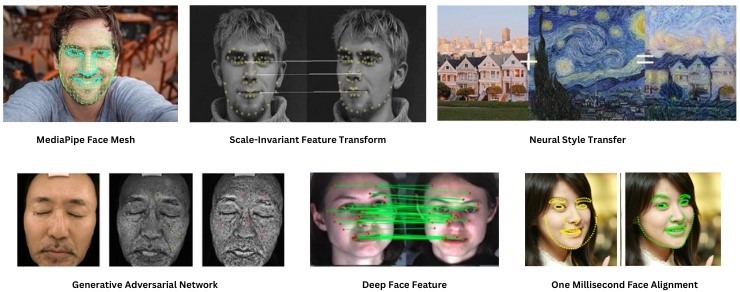

Approaches to Face Detection and Alignment

There are various approaches to automating facial alignment, including algorithms that align faces so that images captured before and after model execution can be compared directly. Some of them are described below:

- MediaPipe Face Mesh: This landmark detection model identifies a set of 468 facial landmarks, including points on the eyes, nose, mouth, and other facial features, which can then be used to transform and align faces. Face alignment uses these detected landmarks to transform and warp the input image so that the facial features sit in a standardized position. The result can be visualized, for example, by marking in red the pixel coordinates whose values differ between the compared images. Dlib's 68-point facial landmark detector is another example of this kind.

- Scale-Invariant Feature Transform: SIFT is a feature detection algorithm used in computer vision for keypoint extraction and matching. It identifies distinctive features that are invariant to scale and orientation, enabling consistent alignment of the eyes and other facial features such as the nose and mouth. It detects points of interest with a difference-of-Gaussian function, assigns each point an orientation based on histograms of local gradients (a similar idea to the histogram of oriented gradients behind Dlib's HOG-based face detector), and normalizes the feature vectors for invariance to lighting changes. Matching uses the Best-Bin-First (BBF) algorithm to find approximate nearest neighbors, and false matches are eliminated with a distance-ratio test.

- Neural Style Transfer: This optimization technique blends a content image and a style reference image by separating their content and style representations inside a convolutional neural network (the original formulation uses a pretrained VGG network). The generated image is optimized to minimize a loss that combines a content term, which compares its feature activations with those of the content image, and a style term, which compares Gram matrices of feature correlations with those of the style reference. Content reconstruction retains high-level content, while style reconstruction matches feature correlations. Higher network layers capture content and arrangement, while lower layers stay closer to the exact pixel values.

- Generative Adversarial Network: GANs, which have also been applied to 3D face alignment, are deep learning-based generative models consisting of a generator and a discriminator. The generator creates new, realistic examples, while the discriminator classifies images as real or fake. The two models are trained adversarially: each improvement in the discriminator reduces the generator's effectiveness until the generator improves in turn, and vice versa. GANs excel at image synthesis and, in conditional variants, can leverage additional information such as class labels where a dataset provides them.

- Deep Face Feature: The DFF model is trained on face images rendered from various views, which enables it to extract a feature vector for each pixel of a face image. DFF captures the global structural information of face images, effectively distinguishing different facial regions, and outperforms general-purpose feature descriptors in face-related tasks such as matching and alignment. Its training method leverages ground-truth correspondence between face images with different poses and expressions in the training set, so that corresponding semantic pixels across different face images have similar deep face feature values. Other deep learning-based face detectors include Multi-task Cascaded Convolutional Networks (MTCNN, which also predicts facial landmarks used for alignment), Single Shot MultiBox Detector (SSD), and You Only Look Once (YOLO), while Google's FaceNet maps aligned face images to embeddings used for recognition.

- Others: These include Haar cascades, which use a cascade of simple features to detect faces but are not very effective on images with complex backgrounds or varied lighting; OpenCV provides them for face detection through cv2.CascadeClassifier. Faster R-CNN, a region-based CNN, detects faces by generating region proposals and refining them, with high precision and recall; it is usually used for general object detection but can be trained for human or face detection. Active Shape Models (ASM) and Active Appearance Models (AAM) are statistical models that iteratively adjust a face shape model to fit a detected face; they capture shape variations well but are sensitive to initialization and can be computationally intensive. Another efficient method is Kazemi and Sullivan's one millisecond face alignment, a fast and accurate approach based on an ensemble of regression trees and implemented in Dlib, although it may not handle extreme poses or occlusions well.

Types of approaches to face alignment for face recognition

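The landmark-based alignment described above can be sketched in a few lines. This minimal Python example assumes the two eye centres have already been obtained from a landmark detector such as MediaPipe Face Mesh or Dlib's 68-point model (which landmark indices to average is left to the caller), and it builds a similarity transform (rotation, uniform scale, translation) that moves both eyes to fixed positions in the output crop. The function names `eye_alignment_matrix` and `apply_affine`, the 256 x 256 output size, and the target eye position of 35% across and 40% down are illustrative assumptions, not values from any particular library.

```python
import math


def eye_alignment_matrix(left_eye, right_eye, output_size=(256, 256),
                         desired_left_eye=(0.35, 0.40)):
    """Return a 2x3 affine matrix (rotation + uniform scale + translation)
    that maps both eye centres to fixed positions in the output image.

    left_eye, right_eye: (x, y) eye centres in the source image
    (left = the eye on the image's left side).
    desired_left_eye: hypothetical target for the left eye, as fractions of
    output width/height; the right eye is placed symmetrically at (1 - x, y).
    """
    (lx, ly), (rx, ry) = left_eye, right_eye
    out_w, out_h = output_size
    dx, dy = rx - lx, ry - ly
    dist = math.hypot(dx, dy)              # current inter-eye distance
    if dist == 0:
        raise ValueError("eye centres must be distinct")
    angle = math.atan2(dy, dx)             # tilt of the line through the eyes
    desired_dist = (1.0 - 2.0 * desired_left_eye[0]) * out_w
    scale = desired_dist / dist
    # Rotate by -angle to make the eye line horizontal, then scale uniformly.
    a = math.cos(-angle) * scale
    b = math.sin(-angle) * scale
    # Choose the translation so the midpoint between the eyes lands at the
    # horizontal centre of the output, at the desired eye height.
    cx, cy = (lx + rx) / 2.0, (ly + ry) / 2.0
    tx = out_w * 0.5 - (a * cx - b * cy)
    ty = out_h * desired_left_eye[1] - (b * cx + a * cy)
    return [[a, -b, tx],
            [b, a, ty]]


def apply_affine(m, point):
    """Apply a 2x3 affine matrix to a single (x, y) point."""
    x, y = point
    return (m[0][0] * x + m[0][1] * y + m[0][2],
            m[1][0] * x + m[1][1] * y + m[1][2])
```

For eyes at (100, 120) and (160, 180), a 45-degree tilt, the matrix maps them to (89.6, 102.4) and (166.4, 102.4): level and a fixed distance apart. With OpenCV installed, the same matrix converted to a NumPy `float32` array could be passed to `cv2.warpAffine(image, M, (256, 256))` to warp the whole image rather than individual points.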
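The distance-ratio test used to discard false SIFT matches is easy to illustrate. This sketch assumes descriptors are plain tuples of floats (real SIFT descriptors have 128 dimensions) and swaps in a brute-force nearest-neighbour search in place of Best-Bin-First, which finds the same two nearest neighbours approximately but faster on large sets. The `match_descriptors` name and the 0.8 ratio threshold are illustrative assumptions.

```python
import math


def match_descriptors(desc_a, desc_b, ratio=0.8):
    """Match each descriptor in desc_a to its nearest neighbour in desc_b,
    keeping a match only if it passes the distance-ratio test.

    Brute-force search stands in for Best-Bin-First here; both look for the
    two nearest neighbours of each query descriptor.
    Returns a list of (index_in_a, index_in_b) pairs.
    """
    if len(desc_b) < 2:
        return []  # the ratio test needs a best and a second-best candidate
    matches = []
    for i, d in enumerate(desc_a):
        # Euclidean distance from d to every descriptor in desc_b, closest first.
        dists = sorted((math.dist(d, e), j) for j, e in enumerate(desc_b))
        (best, j_best), (second, _) = dists[0], dists[1]
        # Reject ambiguous matches: the best candidate must be clearly
        # closer than the runner-up.
        if best < ratio * second:
            matches.append((i, j_best))
    return matches
```

A query whose two closest candidates are almost equally near is dropped, because either could be the true correspondence. In OpenCV, descriptors from `cv2.SIFT_create().detectAndCompute(...)` are commonly matched with `cv2.BFMatcher().knnMatch(des1, des2, k=2)` followed by the same ratio check.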
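To make the role of the Gram matrix in neural style transfer concrete, the sketch below computes content and style losses on toy feature maps. Each feature map is assumed to be a list of C channels, each a flattened list of activations; in practice these would come from selected layers of a pretrained CNN. The normalisation (dividing by the number of positions and by C squared) and the weights `alpha` and `beta` are simplifying assumptions rather than the exact constants of the original formulation.

```python
def gram_matrix(features):
    """features: C channels, each a flat list of N activations.
    Returns the C x C matrix of channel-to-channel inner products, divided by N."""
    n = len(features[0])
    return [[sum(x * y for x, y in zip(fi, fj)) / n for fj in features]
            for fi in features]


def style_loss(gen_features, style_features):
    """Mean squared difference between the Gram matrices of two feature maps."""
    g, s = gram_matrix(gen_features), gram_matrix(style_features)
    c = len(g)
    return sum((g[i][j] - s[i][j]) ** 2
               for i in range(c) for j in range(c)) / (c * c)


def content_loss(gen_features, content_features):
    """Mean squared difference between the raw feature activations."""
    diffs = [(x - y) ** 2
             for fg, fc in zip(gen_features, content_features)
             for x, y in zip(fg, fc)]
    return sum(diffs) / len(diffs)


def total_loss(gen, content, style, alpha=1.0, beta=1e3):
    """Weighted sum that the generated image would be optimized to minimize."""
    return alpha * content_loss(gen, content) + beta * style_loss(gen, style)
```

Because the Gram matrix sums over spatial positions, the feature map `[[2, 1], [4, 3]]` has the same Gram matrix as `[[1, 2], [3, 4]]`: its style loss against that map is 0.0 while its content loss is 1.0. This is why the style term captures texture-like correlations rather than layout.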
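The adversarial training of a GAN can be written with binary cross-entropy on the discriminator's outputs. In this sketch the discriminator's probabilities are passed in directly as floats, so no network is involved; the function names are hypothetical, and the generator uses the common non-saturating form of the loss rather than the original minimax form.

```python
import math


def bce(prob, label, eps=1e-7):
    """Binary cross-entropy for one predicted probability and a 0/1 label."""
    p = min(max(prob, eps), 1.0 - eps)  # clip to avoid log(0)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))


def discriminator_loss(d_real, d_fake):
    """d_real / d_fake: discriminator's probabilities that real / generated
    images are real. The discriminator wants d_real -> 1 and d_fake -> 0."""
    real = sum(bce(p, 1) for p in d_real) / len(d_real)
    fake = sum(bce(p, 0) for p in d_fake) / len(d_fake)
    return real + fake


def generator_loss(d_fake):
    """Non-saturating generator loss: low when the discriminator scores
    generated images as real (d_fake -> 1)."""
    return sum(bce(p, 1) for p in d_fake) / len(d_fake)
```

A discriminator that confidently rejects fakes (small `d_fake` values) drives the generator loss up, while a generator whose fakes are scored as real drives the discriminator loss up; alternating updates on these two losses is the tug-of-war described above.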
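Haar cascades are fast largely because of the integral image, which lets the sum over any rectangle be computed with four table lookups. The sketch below shows that idea and one two-rectangle Haar-like feature in plain Python; it is a toy illustration of the Viola-Jones building blocks, not OpenCV's `cv2.CascadeClassifier` implementation, and the function names are hypothetical.

```python
def integral_image(img):
    """img: 2D list of pixel intensities (h rows x w columns).
    Returns an (h+1) x (w+1) table where ii[y][x] is the sum of all pixels
    in rows < y and columns < x."""
    h, w = len(img), len(img[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0
        for x in range(w):
            row_sum += img[y][x]
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def rect_sum(ii, x, y, w, h):
    """Sum of the w x h rectangle whose top-left pixel is (x, y): four lookups."""
    return ii[y + h][x + w] - ii[y][x + w] - ii[y + h][x] + ii[y][x]


def two_rect_vertical_feature(ii, x, y, w, h):
    """Haar-like edge feature over a w x h window (h even): sum of the top
    half minus sum of the bottom half. Large when a bright band sits above
    a darker one."""
    half = h // 2
    return rect_sum(ii, x, y, w, half) - rect_sum(ii, x, y + half, w, half)
```

A cascade evaluates thousands of such features at many positions and scales, but each costs only a handful of lookups once the integral image has been built in a single pass.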
Applications of 3D Face Alignment

As foundational computer vision technologies, face detection and alignment have a wide range of applications across many industries. Some examples are given below:

Security and Surveillance

Facial alignment for face recognition can be used to identify individuals and secure access to buildings, airports, events, stadiums, subways, and other sensitive or crowded areas. Existing video surveillance systems can be enhanced with AI video analytics that add features such as automatic face detection and real-time facial recognition, and the technology can also be integrated into surveillance drones with collision avoidance. This is especially useful for monitoring public spaces and recognizing people of interest, such as culprits, suspects, or missing persons, by matching surveillance footage against a database of known individuals.
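Matching a detected face against a database of known individuals is typically done by comparing embeddings. The sketch below assumes a recognition model (for example, a FaceNet-style network) has already mapped each aligned face crop to a vector; the 3-dimensional toy embeddings, the `identify` helper, and the 0.6 cosine-similarity threshold are illustrative assumptions that a real system would replace and tune on its own data.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero embedding as matching nothing
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)


def identify(query, gallery, threshold=0.6):
    """Return the gallery identity most similar to the query embedding,
    or None if no similarity reaches the (hypothetical) threshold.

    gallery: dict mapping identity name -> embedding of a known individual.
    """
    best_name, best_score = None, threshold
    for name, emb in gallery.items():
        score = cosine_similarity(query, emb)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name
```

Returning `None` below the threshold matters in surveillance: a face that resembles nobody in the database should be reported as unknown rather than forced onto the closest match.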

BFSI

Biometric authentication is now a standard feature on most smartphones, laptops, tablets, and other devices, letting users log in securely through face recognition, supported by face alignment, instead of a traditional password. Face recognition is also used in payment systems to authorize financial transactions as an additional layer of security, for example, ADCB FacePass.

Healthcare

Face detection and alignment play a vital role among the applications of computer vision in healthcare. Recognizing patients' facial expressions during monitoring through vision systems helps to assess their level of pain or discomfort, side effects of medication, and emotions related to their current health, all of which can be used to adjust therapeutic settings. Landmark analysis can also support the timely diagnosis of genetic disorders that manifest through distinctive facial features. These systems can also be integrated into smart homes for elderly care and assistance for disabled people, as part of human action recognition and well-being monitoring systems that detect falls or signs of distress in facial expressions and alert caretakers.

Enhanced access management with face detection and alignment

Retail

In the retail industry, face alignment can be used to estimate age and to compare specific facial features, such as wrinkles, pores, and spots, over a period of time. It can also drive personalized advertising based on the age, gender, and emotions of customers in stores. By analysing customers' facial expressions, retailers can gauge their reactions and feedback to products and services without having to engage them directly, gaining valuable insights into product and service performance for devising better business strategies without taking up customers' time. Customers can also virtually try on products such as glasses, makeup, jewelry, accessories, and hairstyles on e-commerce websites such as Lenskart and Sephora, which use these technologies to detect and align customers' faces for a realistic preview. Some product search and recommendation engines also use this technology to detect customers' facial characteristics and emotions and then showcase preferred products for a more personalized shopping experience.

Entertainment

Social media apps such as Snapchat and Instagram offer a plethora of augmented reality-based facial filters. These filters overlay effects on users' faces in real time and are powered by face detection and alignment, which track facial landmarks from frame to frame. In the gaming industry, the same technology enables realistic facial animation for user avatars in AR/VR environments, for an intuitive and immersive experience. Another application is scanning and aligning a player's face to create a closely resembling avatar or to customize a character in video games. Face alignment also enables motion capture, enhancing the realism of characters in films and games by accurately capturing actors' facial movements. Social robots such as Sophia use this technology to recognize and interact with humans, detecting and aligning faces and producing appropriate responses based on their expressions to make natural conversation possible.

Automotive

In particular, alignment of the eyes can be used to detect drowsiness or distraction in drivers, alerting them and helping to prevent accidents. The system can also identify the driver to authorize access to further vehicle operations, as in solutions from Bosch.
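One widely used way to turn eye landmarks into a drowsiness signal is the eye aspect ratio (EAR) proposed by Soukupová and Čech, which drops towards zero as the eyelids close. The sketch below assumes six landmarks per eye, ordered as in Dlib's 68-point scheme (indices 36-41 and 42-47, counting from 0); the 0.2 threshold, the 15-frame window, and the `DrowsinessMonitor` class are placeholder assumptions, not the method used by any specific vendor.

```python
import math


def eye_aspect_ratio(eye):
    """eye: six (x, y) landmarks ordered p1..p6, where p1 and p4 are the eye
    corners, p2 and p3 lie on the upper lid, and p6 and p5 lie on the lower
    lid directly below p2 and p3."""
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)   # two lid-to-lid heights
    horizontal = math.dist(p1, p4)                     # corner-to-corner width
    return vertical / (2.0 * horizontal)


class DrowsinessMonitor:
    """Hypothetical helper: flags drowsiness when the average EAR of both eyes
    stays below a threshold for a number of consecutive frames."""

    def __init__(self, ear_threshold=0.2, min_frames=15):
        self.ear_threshold = ear_threshold
        self.min_frames = min_frames
        self.closed_frames = 0

    def update(self, left_eye, right_eye):
        """Feed one frame's eye landmarks; return True if an alert is due."""
        ear = (eye_aspect_ratio(left_eye) + eye_aspect_ratio(right_eye)) / 2.0
        if ear < self.ear_threshold:
            self.closed_frames += 1
        else:
            self.closed_frames = 0   # eyes reopened: reset the counter
        return self.closed_frames >= self.min_frames
```

Requiring several consecutive low-EAR frames separates a prolonged eye closure from an ordinary blink, which lasts only a few frames at typical camera rates.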

Education

Such technologies can improve remote learning platforms by detecting and analyzing students' facial expressions to gauge their engagement and grasp of the subject, so that teachers can adjust their teaching methods accordingly. They are also used to proctor online exams, identifying the examinee and monitoring for suspicious behavior and unfair practices. In research, analysing facial expressions can facilitate clinical trial data analysis and academic psychological studies of human emotions, social interactions, and behaviour, including how customers, subjects, or people in general respond to various stimuli.

Others

Face detection and alignment also improve auto-focus and image enhancement in cameras, ensuring that the subject is in focus and well lit, and they aid post-processing in photography and videography by allowing more precise adjustments to facial features, such as smoothing skin or adjusting lighting. They also enhance virtual assistants by enabling them to read and respond to users' emotions.

Conclusion

KritiKal Solutions can help you excel in a competitive market with its cutting-edge AI-based 3D face alignment services, which are applicable across various industries. These offer real-time insights for customer sentiment analysis, theft and crime prevention, access management, on-premises security in banks, visitor face recognition and registration, geo-fencing in sensitive areas, automatic engine ignition based on face alignment, detection, and recognition, musical instrument learning applications, people counting, and more. KritiKal develops agnostic technologies that integrate seamlessly with clients' databases, performing accurate facial alignment and signature analysis within milliseconds, even on low-resolution and wide-angle images.

Avish Jain currently works as a Senior Software Engineer at KritiKal Solutions. He has over 3 years of experience in this field and is skilled in C, C++, Python, Embedded Systems, Android Automotive, Linux, Yocto, and more. He has helped KritiKal deliver projects on time to some major clients.
